Doctor's Orders

The Surgeon General has the cure for free speech.

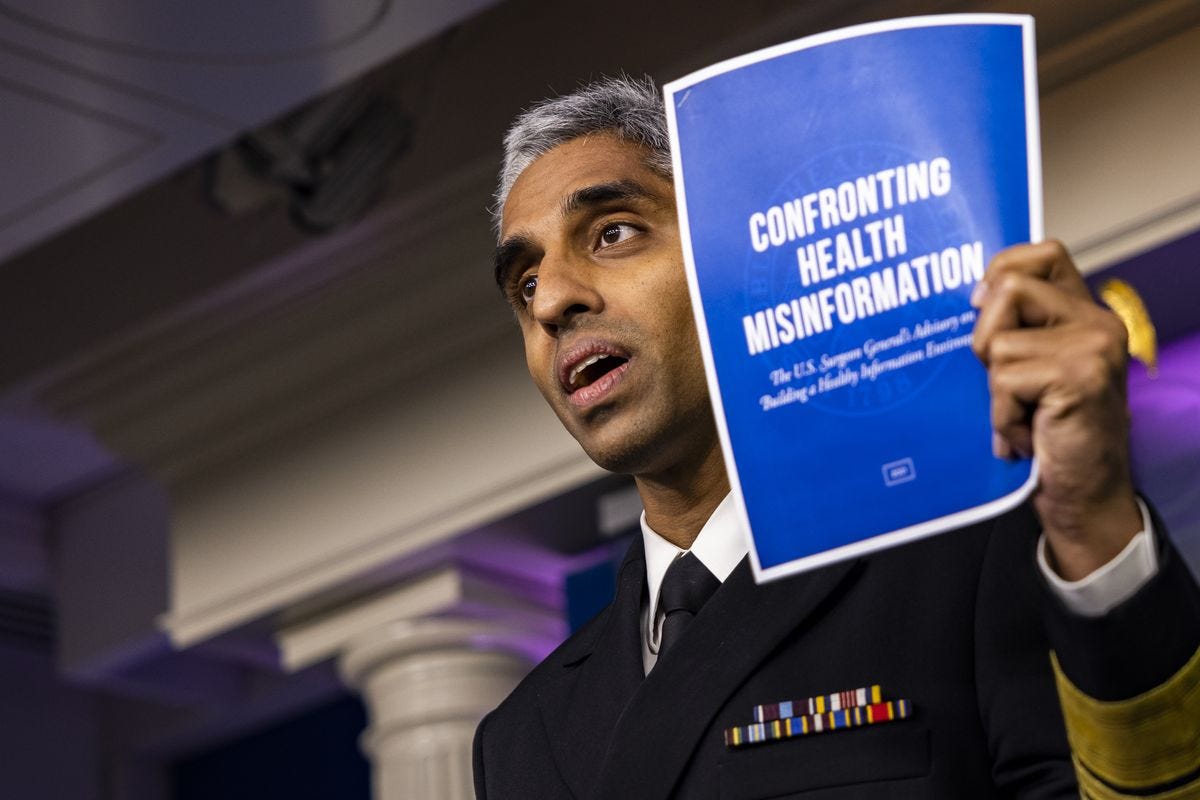

Yesterday, US Surgeon General Vivek Murthy released a public advisory that is — there’s really no better phrase for it — creepy as fuck.

The advisory takes aim at the ubiquity of “health misinformation” online, which the Surgeon General equates to a pandemic in terms of the scale of the threat it poses to the public. He’s even adopted a new word for it just to make the point unmistakably clear: “infodemic.”

This infodemic, Murthy’s memo tells us, is spread when populations that have not yet been treated with the proper “inoculation methods” are “exposed” to dangerous health misinformation at the hands of “super-spreaders.” The advisory lays out ways individuals, families, communities, educators, health professionals, journalists, tech platforms, researchers, funders, foundations, and government agencies can take measures to stop the transmission. It is up to “every single person” in “every sector of society,” the Surgeon General declares, like a latter-day Winston Churchill, to combat the global infodemic. We need nothing less than a “whole-of-society effort” to defeat it.

The aim, obviously, is to get more Americans to take the Covid vaccines. Currently less than sixty percent of Americans are fully vaccinated, and Covid case numbers are surging in almost every state in the country. The administration chalks this failure up to online conspiracy theorists. At a press conference to roll out the report, Press Secretary Jen Psaki referred to “twelve people who are producing 65% of anti-vaccine misinformation on social media platforms.”

That figure comes from a report by the Center for Countering Digital Hate called “The Disinformation Dozen,” which names the top purveyors of anti-vaxx hysteria online and calls for their removal from social media platforms. CCDH is a DC- and London-based NGO, which appears to be staffed by a single person, Imran Ahmed. Its website features a number of campaigns aimed at combating perpetrators of alleged digital toxicity, from anti-vaxx promoters to sexual harassers. Nearly every one of them calls for social media platforms to either “deplatform” or demonetize the transgressors. One particularly ambitious demand proposes that all of the social media platforms ban for life anyone who’s ever said anything racist online.

CCDH’s website describes “two parallel pandemics. One is biological: Covid-19; the other is social: misinformation.” The group’s blunt prescription for the latter problem — online censorship — is reflected in the Surgeon General’s advisory. The advisory calls for tech platforms to “impose clear consequences” for misinformation “super-spreaders” on social media. Though it leaves unspecified what those “consequences” should be, the first use of the word “super-spreader” in the memo is footnoted to CCDH’s report — and the CCDH, which doesn’t have to stay up at night worrying about the First Amendment, is not nearly as coy as the U.S. government about what should be done with these perpetrators.

While those twelve super-spreaders may be the kingpins of the misinformation trade, the Biden administration’s deplatforming campaign is no mere decapitation operation. In her press conference with Murthy, Jen Psaki called directly for Facebook to “remove violative posts” wholesale, whichever suburban grandma they’re coming from. She even boasted that the Biden administration is “flagging problematic posts for Facebook.”

Psaki referred to the administration’s publicly promulgated proposals to the tech platforms as “asks.” But an ask from the President of the United States isn’t like an ask from a user, or even from a board director, and particularly not an ask from President Joe Biden. In his endorsement interview with the New York Times editorial board, then-candidate Biden told the editors that he has “never been a fan of Facebook...never been a big Zuckerberg fan.” A few seconds later he called for Section 230 of the Communications Decency Act, which immunizes tech platforms from legal liability for the content of posts by their users, to be “revoked, immediately.” Section 230 repeal is an existential threat to Facebook and most of the social media platforms. With that threat looming behind the administration’s requests, Psaki’s “asks” are about as polite as the Mafia’s.

The administration isn’t really asking. It’s telling.

I’m no libertarian. I’m not going to shed a tear for Mark Zuckerberg and the poor tech oligarchs. But, like it or not, we all now hold a stake in what happens to the social media platforms. Even if you’ve never had a social media account and never use the internet and live in a small town in the countryside, you still have a stake in it, because at this point, it’s our national public square. Essentially all political speech now takes place online, so the rules that the platforms lay down regarding what you can and cannot say are, in effect, laws. When it comes to speech, the tech companies are our government.

Ever since Trump took office, Democrats have been reciting libertarian talking points, explaining with a sigh to those decrying the tech platforms’ censorship of Trump-loving conspiracy nuts like Alex Jones that the First Amendment does not apply to private corporations. Tech companies can boot whoever they want off their platforms for speech code violations. They can censor whatever political speech they find objectionable — no harm, no foul. The First Amendment only applies to the government silencing expression, and the government has nothing to do with these private transactions between customers and their service providers.

It’s a convenient argument for tech companies that wish to censor their users, and as it turns out, it’s an even more convenient argument for the federal government. If anti-vaxxer Robert F. Kennedy Jr. were standing on a park bench in Dupont Circle screaming about the dangers of vaccines, politicians wouldn’t be able to do a thing about it. But if Robert F. Kennedy Jr. were in a Starbucks, or in the food court of a shopping mall, or on a private digital platform, well then suddenly he would need someone’s permission. That someone might not be the government, per se, but it might be someone who cares about staying in the good graces of the people who run the government. And at the end of the day, that might amount to the same thing.

You might not really care if Robert F. Kennedy Jr. or Alex Jones is gagged by the tech platforms. On its face, neither do I. But I care about where this road leads. I care, for instance, if Bret Weinstein is kicked off YouTube for talking about Ivermectin. And whatever you think of Bret Weinstein or Ivermectin, you should, too.

If you have no idea what I’m referring to, here’s a quick summary: Bret Weinstein is an evolutionary biologist with an extremely popular podcast that used to be up on YouTube. Weinstein believes that the anti-parasitic drug Ivermectin is an extremely effective prophylactic and medication for Covid-19. He also believes that the mRNA vaccines, while also extremely effective, have unknown long-term risks. He has discussed, and advocated for, Ivermectin on air. For that, he found himself in violation of YouTube’s “Covid-19 misinformation policy,” and was kicked off the platform.

I’m no scientist; I’m just a podcast listener. I like Bret’s show and I find myself persuaded by his arguments in favor of Ivermectin. But what do I know? He could be entirely wrong; he could even be bullshitting me. I could be utterly misled. But that’s neither here nor there, because I don’t defend his right to say what he says on YouTube because I’m certain it’s the truth. Nobody’s certain of that, not even Bret. I defend his right to say what he says, first, because it’s a founding principle of our democracy, and second, because his advocacy of this alternative treatment is an appropriate and necessary part of the scientific process. Bret’s confidence in Ivermectin is not based on astrology; it’s based on empirical studies conducted across the world, and results reported from the field. Those studies and results could be totally bunk; I’m not qualified to judge. But those who are qualified to judge should be able to listen to the evidence he presents, and be persuaded by it, or not, and if not, to find the data to debunk it, and present it publicly. This is the scientific process. Whether right or wrong, Bret’s views are as valuable to that dialectic process as those of scientists who diametrically oppose him. It would be outrageous if YouTube’s executives woke up one day and decided that Ivermectin was a miracle drug, and then censored anyone who disagreed with that position. It’s equally outrageous that they’re doing precisely the inverse.

YouTube’s policy bans any speech about Covid-19 that contradicts the opinions of the World Health Organization. The WHO has a lot of scientists in it, but it’s not a scientific entity. Even if it were a scientific entity, that still wouldn’t put its determinations beyond public scrutiny — but it’s not even that. It’s a transnational government agency for public health, which makes it a political institution. So in effect, YouTube is declaring that because a certain political authority has declared the facts to be one thing, the rest of us have no right to question it. That is YouTube’s policy on Covid-19, explicitly.

Maybe that doesn’t sound that bad to you. Perhaps when you think of the WHO, you imagine a crack team of brilliant, selfless, morally irreproachable heroes. Who wouldn’t want to heed their advice to the exclusion of anyone else’s? But let’s play out the logic of YouTube’s policy a few steps. Imagine a world in which YouTube deferred not to the WHO, but to the Biden administration to determine what was scientific fact and what was not, and censored anyone with a dissenting opinion. It’s their prerogative, after all, as a private corporation. Even worse, imagine a world in which, in the name of combating “misinformation,” YouTube deferred to the Biden administration on what was and was not non-scientific fact (after all, concerns about online misinformation over the last five years have hardly been confined to medicine and science). Imagine that on questions related to foreign policy, or the unemployment rate, or the effects of a proposed bill in Congress, YouTube simply banned anyone who disagreed with the government’s official position, writing any dissent off as conspiracy theory. And if that isn’t bad enough, imagine a world in which YouTube deferred to the Trump administration on the same questions, or a George W. Bush administration, or a future President Tom Cotton administration, and censored its users accordingly. That might sound insane, but that’s the road we’re headed down.

Now, granted, such a bald end run around the First Amendment might not get past the first federal judge to weigh its constitutionality. But if YouTube’s current policy is constitutional, then all a given president would need to do is to point to some transnational authority that happens to share the same opinion. Maybe one day Biden decides to revive the zombie corpse of the Trans-Pacific Partnership for the umpteenth time, and a week later, the World Trade Organization releases a study showing that the TPP will lead to economic prosperity from New York to Jakarta. Then, in the name of combating the infodemic of “economic misinformation,” YouTube bans any videos that discuss the harms the TPP will inflict on workers, consumers and the environment.

Does that sound like a functional democracy to you? If not, then how, exactly, is that scenario meaningfully different from what YouTube is currently doing vis-a-vis discussions of Ivermectin?

Back in April, I wrote a piece for Glenn Greenwald’s Substack on a bill before Congress to meet the ostensible twin threats of political extremism and domestic terrorism, made so dramatically evident by the January 6 “insurrection.” Like the PATRIOT Act, the bill aims squarely at the most cartoonishly unsympathetic actors in our political imagination: back then, it was Islamic terrorists; today, it’s “white supremacists.”

But just as the PATRIOT Act was deployed, in no time at all, against antiwar protestors and other purely political targets, it’s already clear that the application of the Domestic Terrorism Prevention Act of 2021, should it pass, will not be limited to neo-Nazis. In a memo last March, Biden’s intelligence agencies pointed to animal rights activists, environmental activists, abortion activists on both sides of the issue, and “anti-authority” groups, including anarchists, as “domestic violent extremists” of concern to federal law enforcement.

It shouldn’t be hard to discern the same bait-and-switch game in the administration’s proposed “whole-of-society” mobilization against the health misinformation “infodemic.” It’s as hard to argue against an effort to get more people vaccinated against a pandemic as it is to argue against shutting down Islamic terrorism cells, or white nationalist militias, which is exactly the point of invoking them. But consider the enduring legal and bureaucratic architecture whose construction we’re being asked to cheer on. Even aside from the naked censorship objectives the Biden administration is pursuing with this initiative, the Surgeon General is asking tech companies to turn over more user data to “researchers,” hire more people and improve artificial intelligence to monitor users more expansively in multiple languages, and to do real-time monitoring of livestreams. In other words, the Biden administration wants even more surveillance online, for the purpose of finding and shutting down speech that the government and its supporters in civil society deem to be untrue or misleading.

When it comes to the narrow issue of vaccines, reasonable people could easily argue that the upside of such an effort, in terms of lives saved, will far outweigh any hypothetical downsides. But only the most credulous among them would dismiss the notion that new powers, once granted, tend to be used in off-label ways. If you’re skeptical of that skepticism, just look up the history of the PATRIOT Act. It doesn’t require a whole lot of political imagination to ponder the question: What could possibly go wrong?
